Define a tool. Get a backend.

LocalFirst turns tool definitions into SQLite persistence, auto-migrations, CRUD, and AI-ready adapters — all from a single schema.

```sh
bunx localfirstdotsh init
```

```ts
import { z } from "zod";
import { defineTool, createLocalFirst } from "@localfirstdotsh/core";

const createReminder = defineTool({
  name: "create_reminder",
  input: z.object({
    title: z.string(),
    dueAt: z.string(),
  }),
  persist: { entity: "reminders", indexes: ["dueAt"] },
});

const app = createLocalFirst({ dbPath: "./app.db", tools: [createReminder] });
await app.migrate();
await app.execute("create_reminder", { title: "Ship v1", dueAt: "2026-04-01" });
```

Tool → database

The schema is your migration

CRUD generated

List, get, update, delete. Zero boilerplate

Works with any LLM

OpenAI, Anthropic, Gemini, and Ollama, plus any MCP client

Local-first by default

SQLite, no network, no backend

Approval flows built in

Gate any tool behind a human-in-the-loop check
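The "CRUD generated" card promises list, get, update, and delete for every persisted entity. A minimal sketch of the parameterized SQLite statements such a layer could emit for the `reminders` entity, assuming every entity table has an `id` primary key. `crudStatements` and `quoteIdent` are hypothetical helpers for illustration, not the LocalFirst API.

```ts
// Hypothetical sketch, NOT the LocalFirst API. Assumes each entity table has
// an "id" primary key and that callers bind values to the `?` placeholders.
type CrudStatements = {
  list: string;
  get: string;
  update: (columns: string[]) => string;
  delete: string;
};

// Double-quote an identifier and escape embedded quotes (SQLite rules).
function quoteIdent(name: string): string {
  return `"${name.replace(/"/g, '""')}"`;
}

function crudStatements(entity: string): CrudStatements {
  const table = quoteIdent(entity);
  return {
    list: `SELECT * FROM ${table} ORDER BY "id" LIMIT ? OFFSET ?`,
    get: `SELECT * FROM ${table} WHERE "id" = ?`,
    // Only the columns being changed appear in SET; "id" is bound last.
    update: (columns) =>
      `UPDATE ${table} SET ${columns.map((c) => `${quoteIdent(c)} = ?`).join(", ")} WHERE "id" = ?`,
    delete: `DELETE FROM ${table} WHERE "id" = ?`,
  };
}

const reminders = crudStatements("reminders");
console.log(reminders.get);
console.log(reminders.update(["title", "dueAt"]));
```

Keeping the statements parameterized means values never get spliced into SQL text; only identifiers derived from the tool definition do.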

Define. Wire. Execute.

That's the entire backend. No database schema to design, no migrations to write, no API to build.

The tool definition is the schema. LocalFirst derives everything else.

```ts
import { z } from "zod";
import { defineTool, createLocalFirst } from "@localfirstdotsh/core";

// 1. Define the tool
const logDecision = defineTool({
  name: "log_decision",
  description: "Record a decision with context and rationale",
  input: z.object({
    title: z.string(),
    context: z.string(),
    decision: z.string(),
  }),
  persist: { entity: "decisions" },
});

// 2. Wire up the runtime
const app = createLocalFirst({
  dbPath: "./decisions.db",
  tools: [logDecision],
});
await app.migrate(); // table + indexes created automatically

// 3. Execute: from your code or an AI model
await app.execute("log_decision", {
  title: "Hire contract vs full-time",
  context: "Q2 headcount tight",
  decision: "Contract for 3 months, revisit in June",
});
```
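To make "the tool definition is the schema" concrete, here is a minimal sketch of deriving SQLite DDL from a tool's input fields and `persist` config, mirroring the `create_reminder` tool. It assumes a plain field map stands in for the Zod object and that each table gets an `id INTEGER PRIMARY KEY`; `deriveDDL`, `FieldSpec`, and the `idx_<entity>_<column>` index naming are hypothetical, not how LocalFirst actually names or builds things.

```ts
// Hypothetical sketch: a plain field map stands in for the Zod schema.
// LocalFirst derives its tables itself; names and types here are assumptions.
type FieldType = "string" | "number" | "boolean";
type FieldSpec = { type: FieldType; optional?: boolean };

interface PersistConfig {
  entity: string;
  indexes?: string[];
}

// SQLite has no boolean type, so booleans are stored as 0/1 integers.
const SQLITE_TYPES: Record<FieldType, string> = {
  string: "TEXT",
  number: "REAL",
  boolean: "INTEGER",
};

function deriveDDL(fields: Record<string, FieldSpec>, persist: PersistConfig): string[] {
  // Required fields become NOT NULL columns; optional ones stay nullable.
  const columns = Object.entries(fields).map(([name, spec]) => {
    const nullability = spec.optional ? "" : " NOT NULL";
    return `"${name}" ${SQLITE_TYPES[spec.type]}${nullability}`;
  });
  const statements = [
    `CREATE TABLE IF NOT EXISTS "${persist.entity}" ("id" INTEGER PRIMARY KEY, ${columns.join(", ")})`,
  ];
  // One index per column listed in persist.indexes.
  for (const column of persist.indexes ?? []) {
    statements.push(
      `CREATE INDEX IF NOT EXISTS "idx_${persist.entity}_${column}" ON "${persist.entity}" ("${column}")`,
    );
  }
  return statements;
}

const ddl = deriveDDL(
  { title: { type: "string" }, dueAt: { type: "string" } },
  { entity: "reminders", indexes: ["dueAt"] },
);
ddl.forEach((sql) => console.log(sql));
```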

Three steps. Zero boilerplate.

Define a tool

Zod schema, persist config, optional handler

LocalFirst generates

SQLite table, migrations, CRUD API, AI-compatible descriptor

Execute from anywhere

Your app, an AI model, the CLI, or an MCP client
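Step two says each definition also yields an AI-compatible descriptor. A minimal sketch of that fan-out, assuming a plain field map in place of the Zod schema: the output shapes follow OpenAI's Chat Completions `tools` entry (`type: "function"` with a JSON Schema `parameters`) and Anthropic's Messages API `tools` entry (`input_schema`), but `ToolSpec`, `toOpenAITool`, and `toAnthropicTool` are hypothetical helpers, not LocalFirst's adapters.

```ts
// Hypothetical sketch: one definition, two provider formats.
// The field map stands in for the Zod schema; real adapters may differ.
type FieldType = "string" | "number" | "boolean";

interface ToolSpec {
  name: string;
  description: string;
  fields: Record<string, { type: FieldType; optional?: boolean }>;
}

// Build a JSON Schema object describing the tool's input.
function toJsonSchema(spec: ToolSpec) {
  const properties: Record<string, { type: FieldType }> = {};
  const required: string[] = [];
  for (const [name, field] of Object.entries(spec.fields)) {
    properties[name] = { type: field.type };
    if (!field.optional) required.push(name);
  }
  return { type: "object" as const, properties, required };
}

// Shape of an entry in OpenAI's Chat Completions `tools` array.
function toOpenAITool(spec: ToolSpec) {
  return {
    type: "function" as const,
    function: { name: spec.name, description: spec.description, parameters: toJsonSchema(spec) },
  };
}

// Shape of an entry in Anthropic's Messages API `tools` array.
function toAnthropicTool(spec: ToolSpec) {
  return { name: spec.name, description: spec.description, input_schema: toJsonSchema(spec) };
}

const logDecision: ToolSpec = {
  name: "log_decision",
  description: "Record a decision with context and rationale",
  fields: { title: { type: "string" }, context: { type: "string" }, decision: { type: "string" } },
};

console.log(JSON.stringify(toOpenAITool(logDecision), null, 2));
console.log(JSON.stringify(toAnthropicTool(logDecision), null, 2));
```

Because both descriptors are built from the same JSON Schema, a field added to the definition shows up in every provider format at once.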

The database is a detail

Most stacks start with a database schema. You design tables, write migrations, build an API, then wire up your app.

LocalFirst starts with tools — the things your system can do. The database is derived from those definitions, not designed upfront.

When the tool changes, the migration follows automatically. Add a field and there is no schema file to update and no API layer to maintain.
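One way an automatic migration can work is to diff the column set derived from the previous tool definition against the new one. A minimal sketch, assuming columns are already resolved to SQLite types; the safe/risky split below is an illustrative assumption in the spirit of the "Schema migrations" card, not LocalFirst's actual rules, and `diffSchemas` is a hypothetical name.

```ts
// Hypothetical sketch: diff two column sets and classify each change.
// The safe/risky rules are assumptions for illustration only.
type Column = { type: string; notNull: boolean };
type Schema = Record<string, Column>;

interface Change {
  sql: string;
  risky: boolean;
  reason: string;
}

const has = (schema: Schema, key: string) => Object.prototype.hasOwnProperty.call(schema, key);

function diffSchemas(table: string, before: Schema, after: Schema): Change[] {
  const changes: Change[] = [];
  for (const [name, col] of Object.entries(after)) {
    if (!has(before, name)) {
      // A nullable column leaves existing rows untouched. SQLite rejects
      // ADD COLUMN ... NOT NULL without a non-NULL default, so flag that case.
      changes.push({
        sql: `ALTER TABLE "${table}" ADD COLUMN "${name}" ${col.type}${col.notNull ? " NOT NULL" : ""}`,
        risky: col.notNull,
        reason: col.notNull ? "NOT NULL column needs a default for existing rows" : "new nullable column",
      });
      continue;
    }
    const prev = before[name];
    if (prev.type !== col.type || prev.notNull !== col.notNull) {
      // SQLite cannot alter a column in place; this requires a table rebuild.
      changes.push({ sql: `-- rebuild "${table}" to change "${name}"`, risky: true, reason: "column definition changed" });
    }
  }
  for (const name of Object.keys(before)) {
    if (!has(after, name)) {
      changes.push({ sql: `ALTER TABLE "${table}" DROP COLUMN "${name}"`, risky: true, reason: "drops existing data" });
    }
  }
  return changes;
}

const v1: Schema = {
  title: { type: "TEXT", notNull: true },
  dueAt: { type: "TEXT", notNull: true },
};
// The new tool version adds an optional `notes` field.
const v2: Schema = { ...v1, notes: { type: "TEXT", notNull: false } };

for (const change of diffSchemas("reminders", v1, v2)) {
  console.log(change.risky ? "RISKY" : "safe", change.sql);
}
```

Safe changes could be applied without prompting, while risky ones are the natural candidates for a preview step before anything touches the database.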

You describe behavior. LocalFirst handles persistence.

Skip the ceremony.

Without LocalFirst

- Design database schema

- Write and manage migrations

- Build CRUD API

- Write validation layer

- Wire to AI tool format

- Handle approval logic separately

With LocalFirst

- Define tool

- Done

Works with your stack.

First-class adapters for the tools you already use.

Everything from the terminal.

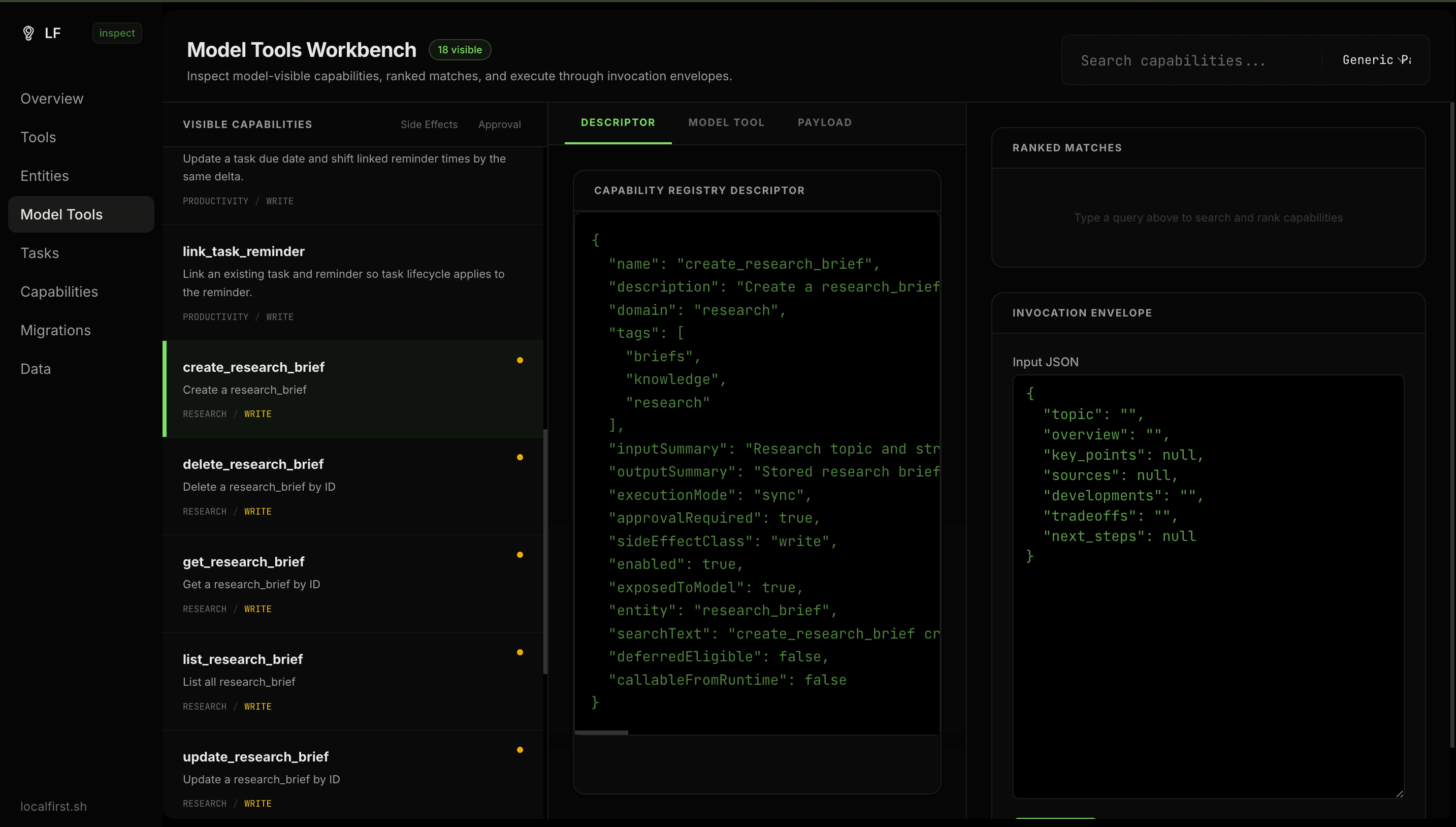

The dev command boots a full inspect workbench — browse tools, entities, data, tasks, migrations, approvals, and audit logs.

bunx localfirstdotsh init --example# Scaffold a working instance

bunx localfirstdotsh dev# Open inspect UI against your local db

bunx localfirstdotsh serve# Start an MCP server

bunx localfirstdotsh install claude# Wire into Claude Code

The Inspect Workbench

Built for real workflows.

AI memory

Persist everything an agent learns or decides, automatically

Decision logs

Build a searchable record of AI-assisted decisions

Research store

Structure and query notes, sources, and findings from any LLM session

CRM without a backend

Contacts, interactions, and follow-ups driven entirely by tools

Agent action history

Every tool call logged, indexed, queryable

Approval-gated automation

Let the model suggest, let the human confirm
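The last two use cases, agent action history and approval-gated automation, fit together: the model proposes a call, a per-tool policy decides whether a human must confirm it, and every step lands in an audit log. A minimal in-memory sketch under stated assumptions: the mode names `manual`, `auto`, and `always_allow` come from the "Approval workflows" card, but their exact behavior is not described on this page, so the sketch only treats `manual` as "wait for a human" and runs the other two immediately. `ApprovalGate`, `request`, and `decide` are hypothetical names, not the LocalFirst API.

```ts
// Hypothetical sketch, NOT the LocalFirst API. Only "manual" waits for a
// human here; "auto" and "always_allow" both run immediately (a simplification).
type ApprovalMode = "manual" | "auto" | "always_allow";
type AuditKind = "requested" | "approved" | "rejected" | "executed";
type AuditEvent = { at: string; tool: string; kind: AuditKind };
type RunFn = (tool: string, input: unknown) => unknown;
type Outcome = { status: "done"; result: unknown } | { status: "pending"; id: number };

class ApprovalGate {
  readonly audit: AuditEvent[] = [];
  private pending = new Map<number, { tool: string; input: unknown }>();
  private nextId = 1;
  private globalMode: ApprovalMode;
  private perTool: Record<string, ApprovalMode>;
  private run: RunFn;

  constructor(globalMode: ApprovalMode, perTool: Record<string, ApprovalMode>, run: RunFn) {
    this.globalMode = globalMode;
    this.perTool = perTool;
    this.run = run;
  }

  // A per-tool setting wins; otherwise fall back to the global default.
  modeFor(tool: string): ApprovalMode {
    return this.perTool[tool] ?? this.globalMode;
  }

  // Called when a model (or your own code) asks to run a tool.
  request(tool: string, input: unknown): Outcome {
    this.log(tool, "requested");
    if (this.modeFor(tool) !== "manual") {
      const result = this.run(tool, input);
      this.log(tool, "executed");
      return { status: "done", result };
    }
    const id = this.nextId++;
    this.pending.set(id, { tool, input });
    return { status: "pending", id };
  }

  // Called by the human reviewer to approve or reject a pending call.
  decide(id: number, approve: boolean): unknown {
    const call = this.pending.get(id);
    if (!call) throw new Error(`No pending call ${id}`);
    this.pending.delete(id);
    if (!approve) {
      this.log(call.tool, "rejected");
      return undefined;
    }
    this.log(call.tool, "approved");
    const result = this.run(call.tool, call.input);
    this.log(call.tool, "executed");
    return result;
  }

  private log(tool: string, kind: AuditKind): void {
    this.audit.push({ at: new Date().toISOString(), tool, kind });
  }
}

const gate = new ApprovalGate("manual", { log_decision: "always_allow" }, (tool, input) => ({ tool, input }));
const first = gate.request("log_decision", { title: "Hire contract" }); // runs immediately
const second = gate.request("create_reminder", { title: "Ship v1" }); // waits for a human
if (second.status === "pending") gate.decide(second.id, true);
console.log(gate.audit.map((e) => `${e.tool}:${e.kind}`));
```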

For when you need more.

Production-grade features that grow with your use case.

Durable tasks

Queue long-running tool executions with retries, checkpoints, and cancellation

Approval workflows

Choose manual, auto, or always_allow approval per tool or globally

Workflow graph

Resource links with cascade policies between entities

Schema migrations

Safe vs. risky change detection, with a preview before any migration is applied

Audit logging

Every execution, approval, and rejection is recorded

Inspect UI

Full workbench bundled with the CLI
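The "Durable tasks" card mentions retries, checkpoints, and cancellation. A minimal in-memory sketch of how those three pieces can fit together, assuming a task is a step function called repeatedly that returns its progress as a checkpoint. `runTask`, `Step`, and the exponential backoff policy are hypothetical; a real durable runner would also persist the checkpoint (for example to SQLite) so work survives a process restart.

```ts
// Hypothetical sketch: retries with exponential backoff, a checkpoint carried
// across attempts, and cancellation via AbortSignal. Nothing is persisted here.
type Step<C> = (checkpoint: C, signal: AbortSignal) => Promise<{ done: boolean; checkpoint: C }>;

// Wait `ms` milliseconds, rejecting early if the signal is aborted.
function sleep(ms: number, signal: AbortSignal): Promise<void> {
  return new Promise((resolve, reject) => {
    if (signal.aborted) return reject(new Error("cancelled"));
    let timer: ReturnType<typeof setTimeout>;
    const onAbort = () => {
      clearTimeout(timer);
      reject(new Error("cancelled"));
    };
    timer = setTimeout(() => {
      signal.removeEventListener("abort", onAbort);
      resolve();
    }, ms);
    signal.addEventListener("abort", onAbort, { once: true });
  });
}

async function runTask<C>(
  step: Step<C>,
  initial: C,
  opts: { maxAttempts: number; baseDelayMs: number; signal: AbortSignal },
): Promise<C> {
  let checkpoint = initial;
  let failures = 0; // consecutive failures of the current step
  while (true) {
    if (opts.signal.aborted) throw new Error("cancelled");
    try {
      const result = await step(checkpoint, opts.signal);
      checkpoint = result.checkpoint; // progress is kept even if a later step fails
      failures = 0;
      if (result.done) return checkpoint;
    } catch (err) {
      failures += 1;
      if (failures >= opts.maxAttempts) throw err;
      await sleep(opts.baseDelayMs * 2 ** (failures - 1), opts.signal);
    }
  }
}

// Example: process 5 items one per call; the third call fails once.
async function demo(): Promise<{ final: { processed: number }; calls: number }> {
  let calls = 0;
  const step: Step<{ processed: number }> = async ({ processed }) => {
    calls += 1;
    if (calls === 3) throw new Error("transient failure");
    const next = processed + 1;
    return { done: next >= 5, checkpoint: { processed: next } };
  };
  const controller = new AbortController();
  const final = await runTask(step, { processed: 0 }, { maxAttempts: 3, baseDelayMs: 10, signal: controller.signal });
  return { final, calls };
}

demo().then(({ final, calls }) => console.log(`processed ${final.processed} items in ${calls} calls`));
```

Because the retry resumes from the last checkpoint rather than from the initial state, the failed third call costs one extra step instead of restarting the whole task.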

Start building local-first tools today.

No database setup. No API boilerplate. No backend.

```sh
bunx localfirstdotsh init --example
bun add @localfirstdotsh/core zod
```